Every AI coding tool on the market shares the same fundamental flaw: it treats your specifications as context, not as law.

You write detailed requirements. The LLM reads them. It generates code that looks reasonable. You review the output and discover half the specs were ignored, reinterpreted, or silently dropped. The time you saved using AI gets consumed correcting AI.

This is not a tooling problem. It is an architecture problem.

The Trust Gap

When a single LLM is responsible for both understanding requirements and verifying compliance, enforcement does not happen. The model that generates code cannot be trusted to judge whether that code meets your spec. That is the fox guarding the henhouse.

Consider what happens in traditional software delivery. You write acceptance criteria. A CI/CD pipeline runs tests. If tests fail, the build fails. No human decides whether to ship anyway — the gate holds or it does not.

Now consider what happens with AI coding tools. You write requirements in a prompt. The LLM generates code. The same LLM (or you, manually) checks whether the output matches the requirements. There is no gate. There is no binary pass/fail. There is only hope.
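To make the contrast concrete, here is a minimal sketch of what a binary gate over generated code could look like. The criteria, the function name `parse_order`, and the helpers `check` and `gate` are all hypothetical, chosen only for illustration; real acceptance criteria would usually be a test suite rather than pattern checks.

```python
import re

# Hypothetical acceptance criteria, each written as a predicate over generated source.
# A real project would more likely run a test suite; these stand in for it.
CRITERIA = {
    "defines parse_order": lambda src: re.search(r"^def parse_order\(", src, re.M) is not None,
    "no bare except": lambda src: re.search(r"^\s*except\s*:", src, re.M) is None,
    "no print debugging": lambda src: "print(" not in src,
}


def check(source: str) -> list[str]:
    """Return the names of the criteria that the source violates."""
    return [name for name, holds in CRITERIA.items() if not holds(source)]


def gate(source: str) -> int:
    """Exit-code style result: 0 means the gate holds, 1 means the build fails."""
    return 0 if not check(source) else 1


good = "def parse_order(raw):\n    return raw.split(',')\n"
bad = "def parse(raw):\n    try:\n        return raw\n    except:\n        print('oops')\n"

print(gate(good), check(good))
print(gate(bad), check(bad))
```

The point of the sketch is that nothing in `gate` asks a model for its opinion: the result is computed from the output, and a non-zero result stops the build.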

What Deterministic Means

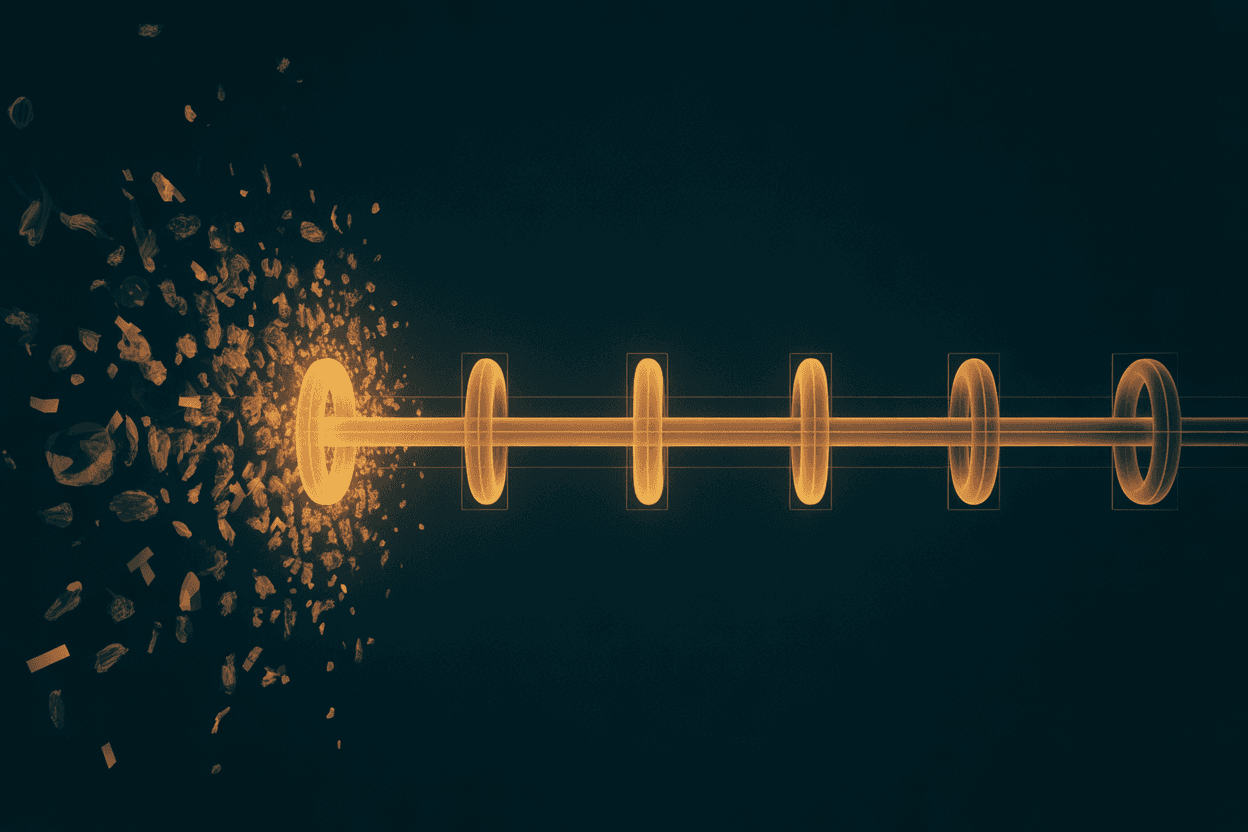

Deterministic AI delivery separates the concerns:

- Code enforces structure. Phase transitions, gate checks, and verification are programmatic. The LLM never decides whether it met the spec.

- LLMs execute substance. The AI writes code, generates content, analyzes problems — the things it is good at.

- State makes it learn. Decisions persist across sessions. The system gets sharper over time, not just faster.

DuranteOS implements this through a 7-phase pipeline called the Algorithm. Every non-trivial request passes through its phases, among them OBSERVE, DEFINE, PLAN, MAKE, and VERIFY. At each transition, code inspects the output and returns pass or fail. The LLM is never asked "did you comply?"; code answers that question.
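The phase-and-gate idea can be sketched in a few lines. This is not DuranteOS code: `run_pipeline`, `GateResult`, `GateFailure`, `stub_worker`, and `non_empty` are hypothetical names, and the sketch uses only the five phases named above because the others are not listed here.

```python
from dataclasses import dataclass

# Only the phases named in the text; the full pipeline is described as having seven.
PHASES = ["OBSERVE", "DEFINE", "PLAN", "MAKE", "VERIFY"]


@dataclass
class GateResult:
    phase: str
    passed: bool
    reason: str


class GateFailure(Exception):
    """Raised when a phase's output does not pass its programmatic check."""


def run_pipeline(request, worker, gates):
    """Run each phase in order and stop at the first gate that fails."""
    results = []
    artifact = request
    for phase in PHASES:
        artifact = worker(phase, artifact)       # the LLM (or a stand-in) does the work
        passed, reason = gates[phase](artifact)  # plain code decides pass or fail
        results.append(GateResult(phase, passed, reason))
        if not passed:
            raise GateFailure(f"{phase} gate failed: {reason}")
    return artifact, results


def stub_worker(phase, artifact):
    """Stand-in for an LLM call so the sketch runs without a model."""
    return f"{artifact} -> {phase.lower()}"


def non_empty(artifact):
    """Trivial gate: the phase must have produced some output."""
    ok = bool(artifact.strip())
    return ok, ("output present" if ok else "empty output")


gates = {phase: non_empty for phase in PHASES}
final, results = run_pipeline("add login form", stub_worker, gates)
print([(r.phase, r.passed) for r in results])
```

The worker never sees the gate functions and never reports on its own compliance. Swapping `non_empty` for a stricter check on one phase changes what passes without changing how the model is called.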

The Economics

You already pay for AI tokens. The question is whether those tokens produce shipped software or rework cycles.

With prompt-based tools, the cost model is: tokens spent generating + tokens spent reviewing + tokens spent correcting + your time reviewing corrections. The correction loop is unbounded because there is no mechanism to prevent it.

With gate-based delivery, the cost model is: tokens spent generating + tokens spent on automated verification. If verification fails, the system retries or aborts. There is no silent degradation. No partial credit.
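A minimal sketch of the retry-or-abort behavior, assuming a fixed attempt cap. The names `deliver`, `DeliveryAborted`, `stub_generate`, and `verify_add` are hypothetical, and the generator is a stub rather than a real model call.

```python
class DeliveryAborted(Exception):
    """Raised when no candidate passes verification within the attempt cap."""


def deliver(generate, verify, max_attempts=3):
    """Return (candidate, attempts_used) for the first candidate that verifies."""
    failures = []
    for attempt in range(1, max_attempts + 1):
        candidate = generate(failures)  # earlier failure reasons are fed back in
        ok, reason = verify(candidate)
        if ok:
            return candidate, attempt
        failures.append(reason)
    raise DeliveryAborted(f"gave up after {max_attempts} attempts: {failures}")


def stub_generate(failures):
    """Stand-in for an LLM: it only gets the answer right after one failure."""
    if failures:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def verify_add(candidate):
    """Run the candidate and check one concrete expectation.

    exec is used only to keep the sketch short; untrusted generated code
    should run in a sandbox.
    """
    scope = {}
    exec(candidate, scope)
    ok = scope["add"](2, 3) == 5
    return ok, ("ok" if ok else "add(2, 3) != 5")


code, attempts = deliver(stub_generate, verify_add)
print(attempts, code)
```

Because the loop is capped, the worst-case cost is `max_attempts` rounds of generation plus verification, and the failure mode is an explicit exception rather than output that quietly misses the spec.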

The gate holds, or work does not ship.

Beyond Autocomplete

The industry frames AI coding tools as productivity multipliers. Write code faster. Generate boilerplate. Autocomplete functions. This framing misses the point.

The bottleneck in software delivery is not typing speed. It is the gap between what was specified and what was shipped. AI can close that gap — but only if specifications are enforced mechanically, not hoped for probabilistically.

Deterministic AI delivery is not about making AI write code faster. It is about making AI write the right code, verified by something other than the AI itself.

That is what DuranteOS builds toward. Not a faster autocomplete. A delivery pipeline where every specification becomes a gate, and the gate holds.